Here’s something that flew under the radar at ELCC 2026 in Copenhagen: while most attendees were focused on clinical data, ESMO quietly laid out the most comprehensive governance architecture for AI in oncology that exists today.

Not one framework. Three. Each targeting a different type of AI tool. All developed by the same two task forces. Together, they answer the question that’s been hanging over the industry: what does “validated AI” actually mean in oncology?

Why This Matters for Medical Affairs

AI is already in oncology. Pathology labs are scoring biomarkers with algorithms. Hospitals are extracting data from medical records with LLMs. Chatbots are answering patient questions. The technology isn’t waiting for guidelines.

But when an HCP asks how an AI-based companion diagnostic was validated, what do you say? When your organization launches an LLM-powered medical information tool, how do you know what evidence is required? Until recently, there was no shared language for these conversations. ESMO just created one.

The Three Frameworks

ESMO’s Real World Data & Digital Health Task Force and Precision Oncology Task Force are building three interconnected frameworks, each covering a different layer of the AI stack. Two are published; the third is still in development.

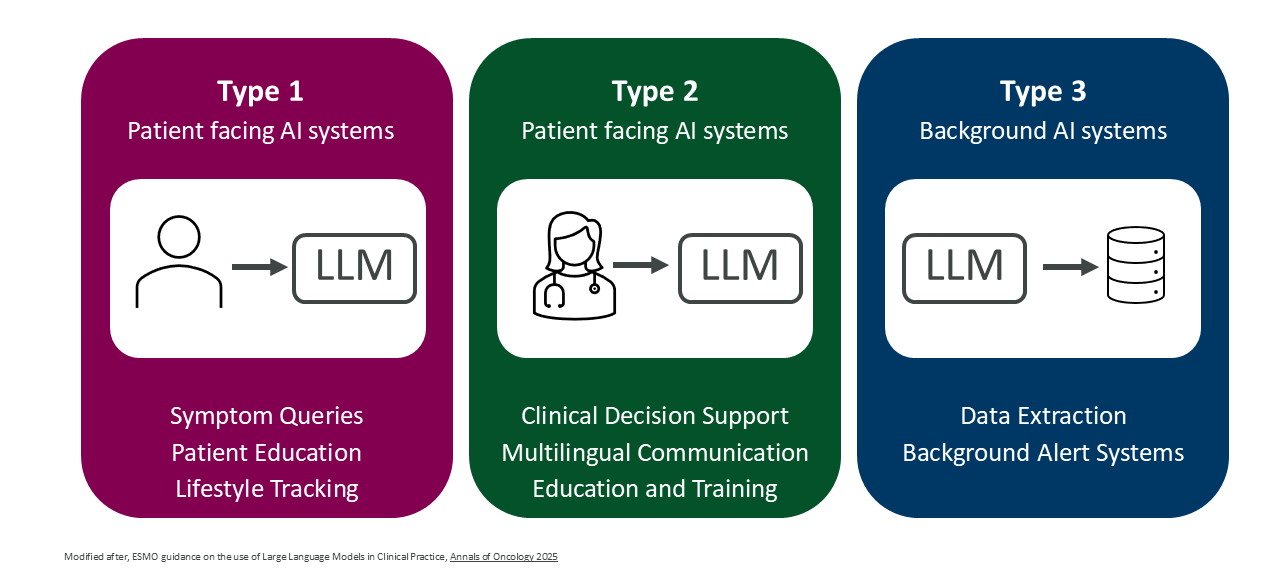

ELCAP governs Large Language Models in clinical practice. Published in Annals of Oncology (2025), it organizes LLM applications into three tiers based on who uses them and for what:

- Type 1 (Patient-facing): Chatbots for symptom queries and education. Must operate within supervised pathways with clear escalation routes. Not a replacement for professional advice.

- Type 2 (HCP-facing): Clinical decision support, multilingual communication, medical education. Requires formal validation. The critical rule: physicians keep full accountability. No delegating treatment decisions to the model.

- Type 3 (Background): Data extraction, automated summaries, trial matching inside EHR systems. Requires pre-deployment testing and ongoing monitoring for bias and model drift.

Source: Annals of Oncology (2025)

The practical insight: the same LLM technology can fall into different tiers depending on how it’s deployed — and the evidence requirements change accordingly.
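To make the tiering concrete, here is a minimal Python sketch of an internal tool inventory. It assumes the ELCAP type can be read off from who primarily interacts with the tool, which is a simplification of the published guidance. The `Deployment` fields, the `"patient"` / `"hcp"` / `"system"` labels, and the `example-llm` model name are hypothetical, and the requirement strings paraphrase the list above rather than quote the consensus statements.

```python
from dataclasses import dataclass
from enum import Enum


class ElcapType(Enum):
    PATIENT_FACING = 1  # Type 1
    HCP_FACING = 2      # Type 2
    BACKGROUND = 3      # Type 3


# Requirements paraphrased from the tier descriptions in this article,
# not quoted from the ELCAP consensus statements.
REQUIREMENTS = {
    ElcapType.PATIENT_FACING: (
        "supervised pathway",
        "clear escalation route",
    ),
    ElcapType.HCP_FACING: (
        "formal validation",
        "physician keeps full accountability",
    ),
    ElcapType.BACKGROUND: (
        "pre-deployment testing",
        "ongoing monitoring for bias and model drift",
    ),
}

# Hypothetical user labels chosen for this sketch.
_USER_TO_TYPE = {
    "patient": ElcapType.PATIENT_FACING,
    "hcp": ElcapType.HCP_FACING,
    "system": ElcapType.BACKGROUND,
}


@dataclass(frozen=True)
class Deployment:
    tool: str          # what the tool does
    model: str         # underlying LLM (placeholder name)
    primary_user: str  # "patient", "hcp", or "system"


def classify(deployment: Deployment) -> ElcapType:
    """Map a deployment to an ELCAP type by who interacts with it."""
    try:
        return _USER_TO_TYPE[deployment.primary_user]
    except KeyError:
        raise ValueError(
            f"unknown primary_user: {deployment.primary_user!r}"
        ) from None


if __name__ == "__main__":
    # Same model, three deployments, three different types.
    inventory = [
        Deployment("symptom education chatbot", "example-llm", "patient"),
        Deployment("decision support assistant", "example-llm", "hcp"),
        Deployment("EHR trial matching", "example-llm", "system"),
    ]
    for d in inventory:
        t = classify(d)
        print(f"{d.tool}: Type {t.value} -> {', '.join(REQUIREMENTS[t])}")
```

The point of keying on the deployment rather than the model is that one `example-llm` appears in all three rows and still lands in three different types. A real inventory would need more than one field to decide borderline cases.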

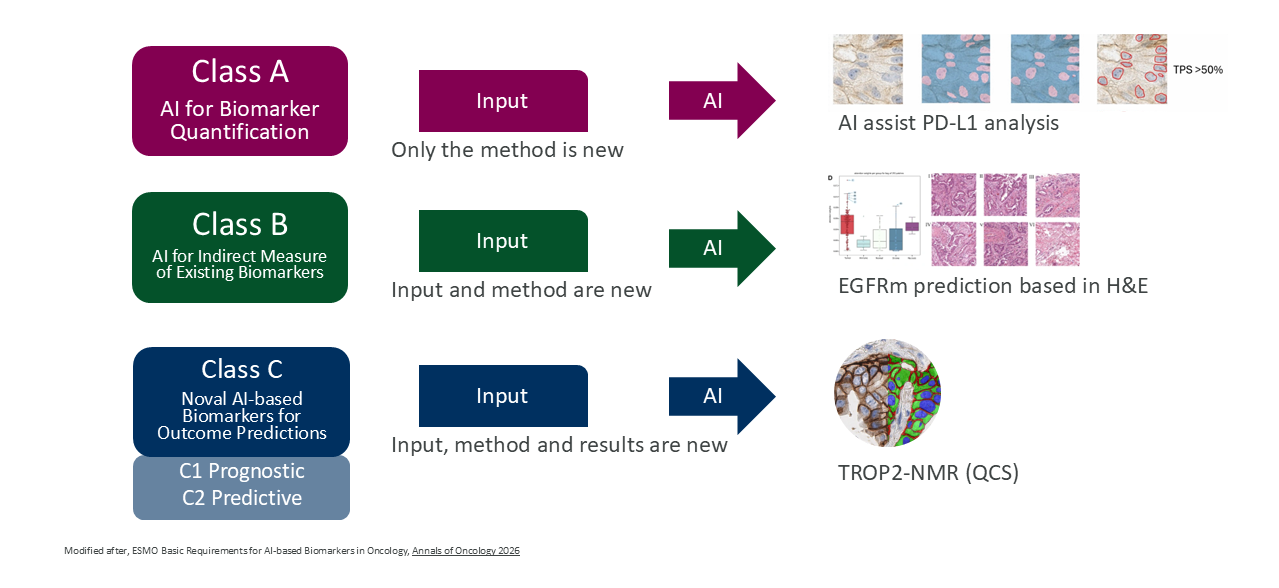

EBAI tackles AI-based biomarkers. Published in Annals of Oncology (2026), it defines four validation classes that scale with clinical risk:

- Class A: Automating established biomarkers — like AI-scored PD-L1 or HER2. Needs concordance studies against expert human readers.

- Class B: Predicting known biomarkers from alternative data — like MSI status from H&E slides. Needs validation against a gold-standard reference test.

- Class C1: Novel prognostic markers derived entirely by AI. Needs high-quality retrospective data.

- Class C2: Novel predictive markers for therapy response. The highest bar: prospective clinical trial validation.

Source: Annals of Oncology (2026)

Three criteria are non-negotiable across all classes: ground truth, performance, and generalizability across institutions, populations, and technical setups.
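The four classes can be read as a small decision tree: is the biomarker novel or established, and if novel, is it prognostic or predictive? The Python sketch below encodes that reading. It is an illustration under assumptions, not the EBAI procedure itself: the function names and the three boolean parameters are hypothetical, and the evidence strings paraphrase the list above.

```python
from enum import Enum


class EbaiClass(Enum):
    A = "A"
    B = "B"
    C1 = "C1"
    C2 = "C2"


# Class-specific evidence, paraphrased from this article's summary.
VALIDATION = {
    EbaiClass.A: "concordance study against expert human readers",
    EbaiClass.B: "validation against a gold-standard reference test",
    EbaiClass.C1: "high-quality retrospective data",
    EbaiClass.C2: "prospective clinical trial validation",
}

# Criteria the article describes as required across all classes.
CROSS_CUTTING = ("ground truth", "performance", "generalizability")


def classify_biomarker(
    novel: bool,
    predictive: bool = False,
    alternative_data: bool = False,
) -> EbaiClass:
    """Assign a class from three yes/no questions (hypothetical helper).

    novel:            the marker is derived by AI, not an established one
    predictive:       it predicts therapy response (only used if novel)
    alternative_data: a known marker is inferred from a different data
                      source, e.g. MSI status from H&E slides
    """
    if novel:
        return EbaiClass.C2 if predictive else EbaiClass.C1
    return EbaiClass.B if alternative_data else EbaiClass.A


def evidence_checklist(cls: EbaiClass) -> list[str]:
    """Class-specific evidence plus the three cross-cutting criteria."""
    return [VALIDATION[cls], *CROSS_CUTTING]


if __name__ == "__main__":
    # AI-scored PD-L1: an established marker read from its usual assay.
    print(classify_biomarker(novel=False).value)
    # MSI status predicted from H&E slides.
    print(classify_biomarker(novel=False, alternative_data=True).value)
    # Novel marker predicting therapy response.
    cls = classify_biomarker(novel=True, predictive=True)
    print(cls.value, evidence_checklist(cls))
```

Every checklist carries the same three cross-cutting criteria regardless of class, which mirrors the statement above that they are non-negotiable; only the first item changes with clinical risk.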

EVAGENT — still under development — will address autonomous AI agents. Systems that don’t just answer questions but act: searching databases, running pathology analyses, proposing treatment strategies. ESMO explicitly states these systems “raise distinct safety, regulatory and ethical challenges” beyond what ELCAP covers.

Why EVAGENT Is Coming Faster Than You Think

The research is already ahead of the governance. A team led by Jakob Nikolas Kather built an autonomous tumor board agent and published the results in Nature Cancer (2025). The system uses GPT-4 with access to approximately 6,800 medical guidelines, PubMed, OncoKB, pathology AI for mutation detection, and imaging AI for radiology analysis.

GPT-4 alone reached 30% accuracy on clinical decision-making. The tool-augmented agent reached 87%. The underlying model is the same in both cases; the difference is the ecosystem of tools the agent can orchestrate.

That gap, from 30% to 87%, is why ESMO is developing EVAGENT. When AI agents operate at this level of complexity, governance frameworks designed for chatbots and scoring algorithms no longer cover what the system actually does.

Where the EU AI Act Ends and ESMO Begins

The EU AI Act provides legal scaffolding. ESMO’s frameworks are the operating manual for oncology.

The EU AI Act says: validate high-risk systems. EBAI defines what “validated” means for a Class C2 predictive biomarker in lung cancer. The EU AI Act requires transparency. ELCAP provides 23 specific consensus statements on how clinicians should interact with LLMs daily.

There are gaps worth knowing. Current regulation expects medical devices to serve a single purpose — which creates friction with multimodal foundation models. Reimbursement policies don’t yet account for AI-driven diagnostics. And ELCAP itself acknowledges a “striking lack” of empirical evidence on patient-facing LLM safety. The rules exist, but the evidence base needs to grow.

What to Do With This

These frameworks aren’t academic exercises. They’re becoming the credibility language for AI in oncology. Here’s how to use them:

- Map your AI tools to ELCAP. Every LLM-based tool your organization uses — medical information chatbots, internal summarization, HCP-facing decision support — falls into Type 1, 2, or 3. Know which tier. Know the evidence requirements.

- Classify your biomarker AI with EBAI. If you work with AI-based companion diagnostics or digital pathology scoring, understand whether you’re Class A, B, C1, or C2. This determines what KOLs and regulators will expect.

- Prepare for EVAGENT. Autonomous agents are arriving faster than most organizations anticipate. Tracking where the framework is heading before it’s published, starting with the agent research it responds to, is a genuine advantage.

- Speak the language. When you discuss AI with KOLs, advisory boards, or cross-functional teams, use the ESMO vocabulary. It signals that you understand the governance landscape — not just the technology.

- Contribute to the gaps. Reimbursement, evidence for patient-facing tools, regulatory harmonization — these are open problems where Medical Affairs has both the expertise and the stakeholder relationships to drive the conversation.

The Bigger Picture

ESMO isn’t waiting for regulation to catch up. They’re writing the rules themselves — a layered, interconnected governance system that covers LLMs, AI biomarkers, and autonomous agents under one coherent architecture.

For Medical Affairs, this is a strategic moment. The teams that learn this playbook early won’t just be compliant — they’ll be the ones shaping how AI enters oncology practice. And in a field where the treatment landscape changes quarterly, that kind of positioning isn’t optional. It’s how you stay relevant.

References

- Wong EYT, Verlingue L, Aldea M, et al. ESMO guidance on the use of Large Language Models in Clinical Practice (ELCAP). Annals of Oncology. 2025;36(12):1447–1457. doi:10.1016/j.annonc.2025.09.001

- Aldea M, Salto-Tellez M, Marra A, et al. ESMO basic requirements for AI-based biomarkers in oncology (EBAI). Annals of Oncology. 2026;37(3):414–430. doi:10.1016/j.annonc.2025.11.009

- Ferber D, El Nahhas OSM, Wölflein G, et al. Development and validation of an autonomous artificial intelligence agent for clinical decision-making in oncology. Nature Cancer. 2025;6(8):1337–1349. doi:10.1038/s43018-025-00991-6

- Truhn D, Azizi S, Zou J, et al. Artificial intelligence agents in cancer research and oncology. Nature Reviews Cancer. 2026;26(4):256–269. doi:10.1038/s41568-025-00900-0

This article was co-authored with Anthropic’s Claude Opus 4.6 model. The ideas, domain expertise, and editorial direction are mine — the AI helped structure, draft, and refine the text.